The UX & Cybersecurity Case for More Local AI Hardware

Earlier this week, two things crossed my path:

- A fantastic breakdown of AMD's latest laptop processors that feature their XDNA AI engines.

- Intel's Innovation Days presentation, which showcased their new processors with local AI hardware (Neural Processing Units - NPUs).

Both of these are great things in my opinion. Let's get into why.

How Do People Use AI?

The simplest possible explanation of the AI systems in the current zeitgeist is that they are pattern recognition and prediction machines. These Large Language Models (or LLMs) are trained on massive amounts of data. (We won't get into the ethical or artistic issues with this. If you want that, watch the following video:)

The user will use some kind of interface - typically text based, but some visual interfaces do exist. The LLM will process the input, figure out the pattern of that input, and then predict the output based on that pattern. (This, incidentally, leads to the "hallucination" problem, where LLM based AI generate stuff that has no actual basis in the prompt or fact.)

There are two main ways these LLM based AI operate:

- On massive server farms.

- On very expensive graphics cards or special processors.

The Cybersecurity Issues

Like it or not, everyone is going to be using LLM based AI in the near future, because if you know how to use them well, you can get some impressive productivity gains.

It also doesn't take a genius to recognize the problems here, especially in the context of the CIA triad:

- Confidentiality

- Integrity

- Availability

Confidentiality, or keeping data out of the hands of anyone who is not supposed to have access, runs into a number of problems when you have server based LLMs:

- Can you keep the data confidential if you have to use someone else's LLM servers?

- Can you ensure that the LLM provider isn't going to incorporate the data into their LLM's training model?

- Increased risk of bad actors intercepting data as it moves between the end user PC and the server.

- Leaky or improperly routed VPNs delivering the data to outside actors.

- Increased opportunity for corporate competitors to track your progress by assessing volume of traffic between company PCs and LLM servers.

And these are just the obvious confidentiality issues that I thought of in five minutes. Things are just as bad when we look at Integrity:

- Data manipulation of the input to the LLM as it travels to the server from the end user.

- Data manipulation of the output from the LLM as it travels from the server to the end user.

- Data corruption due to connection issues between the LLM server and end user.

- Data corruption due to unannounced changes in how the LLM processes data.

When it comes to availability, we've got a whole new set of problems:

- Stability of connection between the end user and LLM server.

- Availability of LLM server resources.

- Potential DDOS of the LLM server/port.

- Local hardware being expensive and hard to obtain.

- Local hardware availability.

- Local hardware connection issues.

The UX Issues

The UX issues are honestly a near 1:1 overlap with the Cybersecurity ones, plus a few new ones:

- The inconvenience of having to sign up for an account.

- Limited options to customize the interface.

- Limited ability to use LLMs optimized to specific tasks.

- Limited ability to use LLMs with little to no training/output bias.

- Random service issues when LLM load is high.

Essentially, AI as tool has a lot of hurdles because it's not practical to run on a local machine. But what if we could?

The Two Paths

There are two main paths to get more local AI hardware in people's hands. The first is building it into new computer processors, like AMD and Intel are doing. These are focused on "inference," which is just a fancy way of saying "taking input into a trained LLM and generating output."

(Note: Intel's AI solution is called Neural Processing Units, but I will refer only to AI engines, because it does the same thing and is easier to remember.)

There are two main ways to do this:

- Devote a small part of the overall processor to AI functions.

- Devote a large part of the overall processor to AI functions.

Currently, Intel and AMD are releasing products that take the first way to do things. This makes sense from a risk mitigation point of view, but also constitutes a huge bet on the part of the hardware designers. They're not only betting on getting the design right, but that the total performance is going to be good enough for most of the lifespan of the CPU.

When you're a corporation that can justify replacing entire computers (or just the CPUs, if you're lucky) every 3-4 years, that's an acceptable risk. For an individual or small organization, the upsides are pretty high, but if you rely on AI for a lot of tasks and the programming for LLMs suddenly changes in a way your AI engine/other PC hardware can't handle, you're in a big pickle.

The other way to do it is to make a big chunk of the CPU an AI engine, like so:

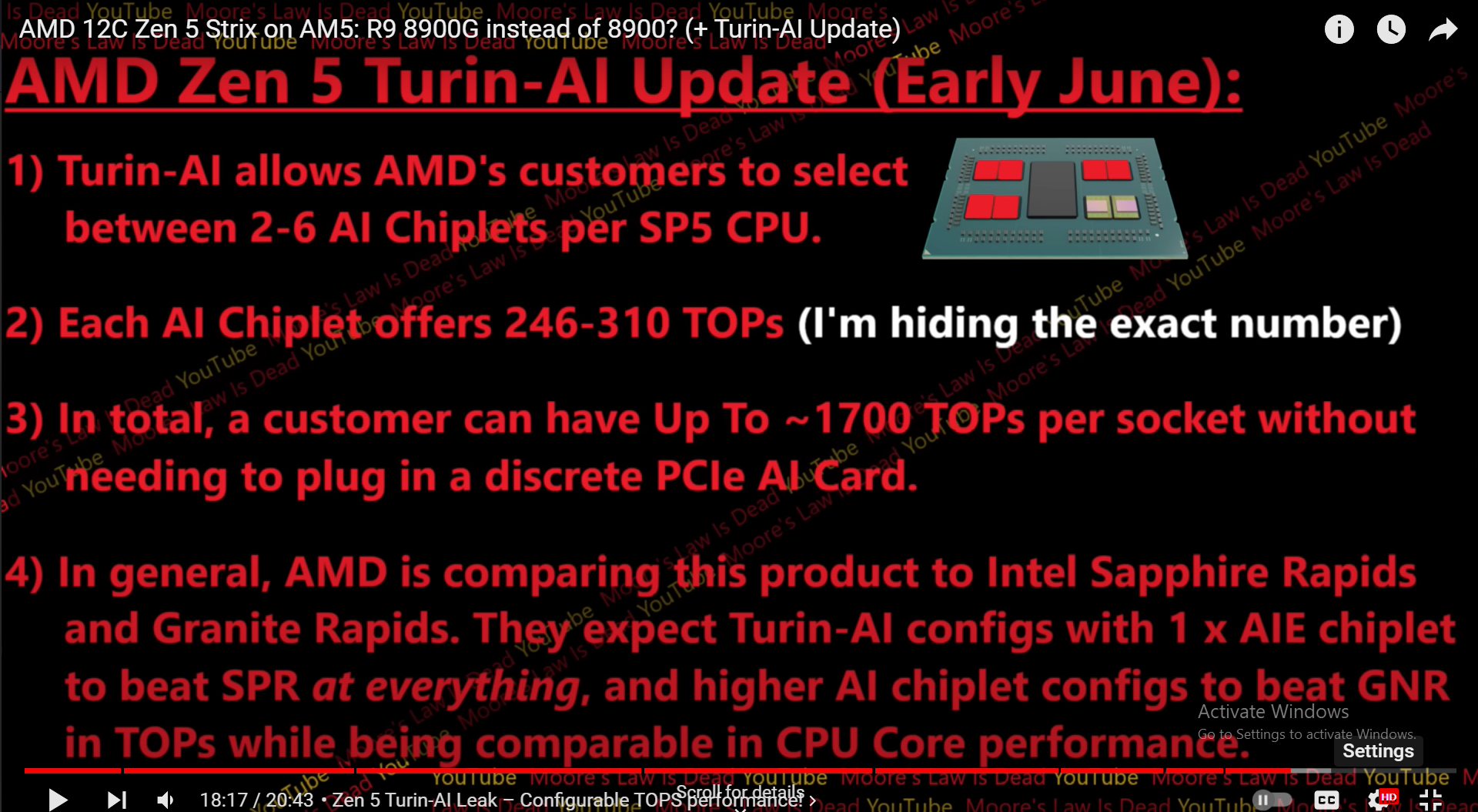

According to tech commentary and analysis channel Moore's Law is Dead, AMD has developed a chiplet that provide more than enough performance for any individual's AI tasks.

For those unfamiliar with how AMD's CPUs are constructed, a chiplet is a small silicon chip with features that would form part of a single larger CPU. So in AMD's case, the actual processor cores are in one or more chiplets, while all the parts that communicate with the rest of the computer are in another. AMD designs its chiplets so that they can work across server, workstation, and desktop PC products.

With AI hardware in high demand, AMD is certainly going to make AI chiplets for servers. But workstation and desktop are a big question mark, especially since servers get the top tier chiplets. Theoretically, even the worst tier AI engine chiplets would provide excessive amounts of LLM AI task performance for even prosumer users.

Will AMD make such a CPU? Maybe. Go let AMD's social media accounts know you want that.

And this leads us to the second path, the M.2 add-on card.

In case you don't know what an M.2 anything is, here's an example:

If something the size of a stick of gum that could handle all your AI engine needs sounds attractive, you are not alone. As it turns out, the company behind Intel's AI engine has actually made these devices... they are just being sold to industrial firms. But the fact that they're aware that people want them means there's a chance we can get them down the line.

What's especially exciting about the idea of an AI engine on an M.2 is the fact that it gives people options. It's not a huge component, or something that's going to be stuck under a heat sink/watercooling solution that's a pain to disassemble. You could stick them in any computer with an M.2 drive, even if the performance wouldn't be great for older PCs. You could potentially have two or more side-by-side in a special adapter that would give you tons of AI processing capabilities.

And this is before we even get into the possibilities of combining the two paths by using both CPU integrated and M.2 add-on AI engines.

Wrap Up

To sum it all up, more local AI hardware solves a number of cybersecurity problems:

| Confidentiality | Integrity | Availability |

| Keeping data confidential. | Data manipulation of LLM input enroute to LLM. | Stability of connection between the end user/LLM server. |

| Risk of LLM developer incorporating confidential data in training model. | Data manipulation of LLM output enroute to end user. | Availability of LLM server resources. |

| Interception of data enroute to LLM. | Data corruption due to issues in LLM/end user connection. | Potential DDOS of the LLM server/port. |

| Improper VPN setups leaking data/delivering data to wrong destination. | Data corruption due to unannounced LLM changes. | Local hardware availability. |

| Less traffic for competitors to analyze. | Local hardware connection issues. |

It also provides opportunities to solve the following UX problems:

- The inconvenience of having to sign up for an account.

- Limited options to customize the interface.

- Limited ability to use LLMs optimized to specific tasks.

- Limited ability to use LLMs with little to no training/output bias.

- Random service issues when LLM load is high.

With two main paths to getting more local AI hardware, either as parts of CPUs or in standalone add-on cards, the future looks bright for local AI. But it'll take a decent amount of yelling at the social media clouds to get everyone the hardware they deserve. And without that hardware being available, there's not much incentive or ability to work on the UX problems.

So let's get that social media campaign going, and